We are going to use Docker to run a MySQL container and the Python `faker` library to generate fake data. Let's set up a project folder and generate some fake user data.

The `gen_fake.py` script generates fake data in the format `id,name,is_active flag,state,country`, one record per row. It defines `def gen_user_data(file_name: str, num_records: int, seed: int = 1) -> None:` and builds its command line with `ArgumentParser(description="Generate some fake data")`, which takes two options: `"-num-records"` (`type=int`, `default=100`, `help="Num of records to generate"`) and `"-seed"` (`type=int`, `default=0`, `help="seed"`).

Grant permissions to the fake data generator and generate 10 million rows. The generated file is then loaded into the `user` table with `LOAD DATA LOCAL INFILE '/var/lib/data/user_data_fin.csv' INTO TABLE user FIELDS TERMINATED BY ','`.

Let's say we work for an e-commerce website. A bug made it to production, and now the `st` (state) field is set incorrectly for users with ids between 3 million (3000000) and 8 million (8000000).
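To make the data generation step concrete, here is a minimal stand-in sketch. It is not the original `gen_fake.py`: it uses Python's standard `random` and `csv` modules instead of `faker`, and the function name `gen_user_rows`, the `STATES` list, the `user_<id>` names, and the fixed country `US` are all assumptions made for illustration. It only reproduces the `id,name,is_active,state,country` row format.

```python
import csv
import random

# Hypothetical sample values; the original script uses faker for these.
STATES = ["NY", "CA", "TX", "WA"]


def gen_user_rows(file_name: str, num_records: int, seed: int = 1) -> None:
    """Write num_records rows of id,name,is_active,state,country to file_name."""
    rng = random.Random(seed)  # seeded so repeated runs produce the same file
    with open(file_name, "w", newline="") as f:
        writer = csv.writer(f)
        for user_id in range(1, num_records + 1):
            name = f"user_{user_id}"       # placeholder name, not a faker name
            is_active = rng.randint(0, 1)  # 0 or 1 flag
            state = rng.choice(STATES)
            writer.writerow([user_id, name, is_active, state, "US"])
```

Calling `gen_user_rows("user_data.csv", 10_000_000)` would produce a comma-separated file in the shape that the `LOAD DATA` statement expects, assuming the table columns are in the same order.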

How do you update millions of records without significantly impacting user experience? How does the update command lock records? There are other approaches, such as swapping tables or running a standard update, depending on your transaction isolation level. Which approach to use depends on your use case. For our use case, let's assume we are updating a user table which, if locked for a significant amount of time (say > 10s), can significantly impact our user experience.

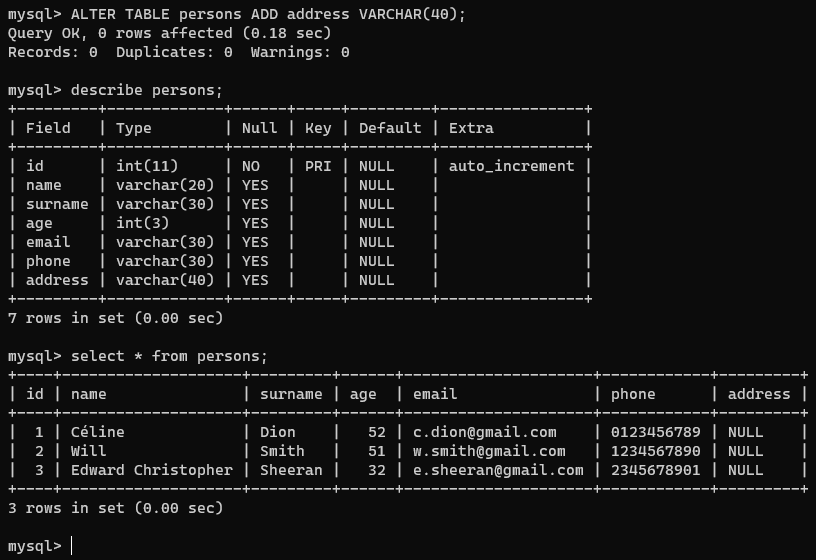

When updating a large number of records in an OLTP database such as MySQL, you have to be mindful about locking the records. While those records are locked, other transactions on your database cannot edit (update or delete) them. One common approach to updating a large number of records is to run multiple smaller updates in batches. This way, only the records being updated at any point are locked.
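The batching idea can be sketched roughly as follows. This is one possible shape, not necessarily the author's implementation: `batch_ranges` and `run_batched_update` are hypothetical helper names, the `execute` and `commit` callables stand in for a database cursor and connection, and the `%s` placeholder style is the one used by common MySQL drivers such as PyMySQL and mysql-connector-python. The table name `user` and column `st` come from the scenario in this post.

```python
from typing import Callable, Iterator, Tuple


def batch_ranges(start_id: int, end_id: int, batch_size: int) -> Iterator[Tuple[int, int]]:
    """Yield inclusive (low, high) id ranges that cover start_id..end_id."""
    low = start_id
    while low <= end_id:
        high = min(low + batch_size - 1, end_id)
        yield low, high
        low = high + 1


def run_batched_update(
    execute: Callable[[str, tuple], object],
    commit: Callable[[], object],
    start_id: int,
    end_id: int,
    batch_size: int,
    new_state: str,
) -> int:
    """Run one small UPDATE per id range, committing after each batch."""
    sql = "UPDATE user SET st = %s WHERE id BETWEEN %s AND %s"
    batches = 0
    for low, high in batch_ranges(start_id, end_id, batch_size):
        execute(sql, (new_state, low, high))
        commit()  # end the transaction so this batch's row locks are released
        batches += 1
    return batches
```

With a PyMySQL connection `conn` and cursor `cur`, a call might look like `run_batched_update(cur.execute, conn.commit, 3000000, 8000000, 50000, "NY")`, where `"NY"` is only a placeholder for whatever the corrected state value should be. Committing after every batch is what keeps each lock short-lived; the batch size is a tuning knob to choose by measuring how long a single batch holds its locks.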
